Latest Updated Microsoft DP-300 certification: Administering Relational Databases on Microsoft Azure | Exam Method

Cloud databases aren’t slowing down, and neither is the demand for professionals who know how to manage them properly. As of December 2025, Microsoft Azure remains one of the most widely adopted enterprise cloud platforms, and companies are actively hiring skilled Azure database administrators who can keep mission-critical data secure, available, and optimized.

The DP-300 certification was officially updated on June 18, 2025, meaning anyone preparing now is studying content that directly matches real-world Azure workloads. If you’ve been waiting for the right moment to validate your database skills, this is it. Let’s break down what’s new, how the DP-300 exam method works, and how to pass it with confidence.

What’s New in the Latest DP-300 Certification Updates

Microsoft periodically updates role-based certifications to align with platform changes—and DP-300 is no exception. The DP-300 latest updates reflect modern Azure database operations, automation, and security best practices.

Key points to know:

- The DP-300 exam was updated on June 18, 2025

- The exam now fully aligns with the current skills outline

- Candidates are strongly advised to review the official study guide regularly

You should always verify updates through the official source:

👉 Microsoft Learn DP-300 exam page

Official DP-300 Exam Overview

The DP-300 exam, officially titled Administering Relational Databases on Microsoft Azure, measures your ability to implement and manage SQL-based solutions in Azure.

This exam validates skills across:

- Azure SQL Database

- Azure SQL Managed Instance

- SQL Server on Azure Virtual Machines

Passing it is the requirement for earning the Microsoft Certified: Azure Database Administrator Associate credential.

DP-300 Exam Format and Method Explained

Understanding the DP-300 exam method removes uncertainty—and that alone boosts your confidence.

Question Types You Should Expect

The exam uses a mix of:

- Multiple-choice questions

- Multiple-response (select all that apply)

- Drag-and-drop scenarios

- Case studies

- Interactive lab-style components

These questions focus on real administrative decisions, not memorization.

Exam Duration, Scoring, and Delivery Options

| Item | Details |
|---|---|
| Number of Questions | ~40–60 |
| Exam Duration | ~100 minutes |
| Passing Score | 700 / 1000 |
| Delivery | Pearson VUE (Online or Test Center) |

Skills Measured in the DP-300 Exam (Latest Blueprint)

Microsoft clearly defines what you’ll be tested on. Below is the current DP-300 skills outline, straight from the official study guide.

Skill Group Breakdown Table

| Skill Group | Weight |
|---|---|
| Plan and implement data platform resources | 20–25% |
| Implement a secure environment | 15–20% |
| Monitor, configure, and optimize database resources | 20–25% |
| Configure and manage automation of tasks | 15–20% |
| Plan and configure a high availability and disaster recovery (HA/DR) environment | 20–25% |

Source:

👉 DP-300 study guide
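One way to turn these weights into a study plan is to split your available hours in proportion to each skill group. The sketch below is only an illustration: it assumes the midpoint of each published range is a fair proxy for the group's share, and both the `allocate_hours` helper and the 40-hour budget are hypothetical.

```python
# Weight ranges (percent) copied from the skills table in this article.
SKILL_WEIGHTS = {
    "Plan and implement data platform resources": (20, 25),
    "Implement a secure environment": (15, 20),
    "Monitor, configure, and optimize database resources": (20, 25),
    "Configure and manage automation of tasks": (15, 20),
    "Plan and configure an HA/DR environment": (20, 25),
}


def allocate_hours(total_hours: float) -> dict[str, float]:
    """Split study hours in proportion to the midpoint of each weight range."""
    midpoints = {skill: (low + high) / 2
                 for skill, (low, high) in SKILL_WEIGHTS.items()}
    total_weight = sum(midpoints.values())
    return {skill: total_hours * mid / total_weight
            for skill, mid in midpoints.items()}


plan = allocate_hours(40)  # 40 hours is a hypothetical study budget
for skill, hours in plan.items():
    print(f"{hours:5.1f} h  {skill}")
```

Treat the result as a starting point and shift hours toward whichever groups your practice results show to be weakest.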

Plan and Implement Data Platform Resources

You’ll need to understand:

- Azure SQL deployment options

- Compute and storage configuration

- Pricing tiers and scaling decisions

Implement a Secure Environment

Expect questions on:

- Microsoft Entra ID (formerly Azure AD) authentication

- Role-based access control (RBAC)

- Data encryption and auditing

Monitor, Configure, and Optimize Database Resources

Focus areas include:

- Performance tuning

- Query optimization

- Index strategies

- Monitoring with Azure tools

Configure and Manage Automation of Tasks

This section covers:

- Azure Automation

- Scheduled jobs

- Maintenance plans

- PowerShell and CLI basics

Plan and Configure HA/DR Environments

Critical skills include:

- Failover groups

- Backup strategies

- Geo-replication

- Business continuity planning

Who Should Take the DP-300 Certification

The DP-300 certification is ideal for:

- Azure database administrators

- SQL Server professionals moving to Azure

- Cloud engineers managing data platforms

- IT professionals targeting Azure-focused roles

If you already work with relational databases, DP-300 is a natural next step.

How to Prepare for the DP-300 Exam Effectively

Official Microsoft Learn Resources (Priority #1)

Microsoft provides free, high-quality learning paths:

- Role-based modules

- Hands-on labs

- Practice assessments

Start here:

👉 Microsoft Learn DP-300 exam page

Hands-on Practice with Azure

Theory alone won’t cut it. Use:

- Azure free account

- SQL Database and Managed Instance labs

- Backup, restore, and failover testing

Hands-on experience often makes the difference between passing and failing.

Practice Tests and Exam Simulation

Many candidates also use third-party practice materials to simulate the exam experience. Leads4Pass is a popular option that offers updated DP-300 practice questions with explanations, available in PDF and VCE formats.

👉 Check out Leads4Pass for additional practice materials: https://www.leads4pass.com/dp-300.html

Let’s take a look at some of the latest DP-300 exam questions!

Latest DP-300 exam questions and answers

| Number of exam questions | Complete exam materials |
|---|---|
| 15 (Free) | 376 Q&A (PDF, VCE, PDF+VCE) |

Question 1:

You need to recommend a solution to ensure that the customers can create the database objects. The solution must meet the business goals.

What should you include in the recommendation?

A. For each customer, grant the customer ddl_admin to the existing schema.

B. For each customer, create an additional schema and grant the customer ddl_admin to the new schema.

C. For each customer, create an additional schema and grant the customer db_writer to the new schema.

D. For each customer, grant the customer db_writer to the existing schema.

Correct Answer: B

Question 2:

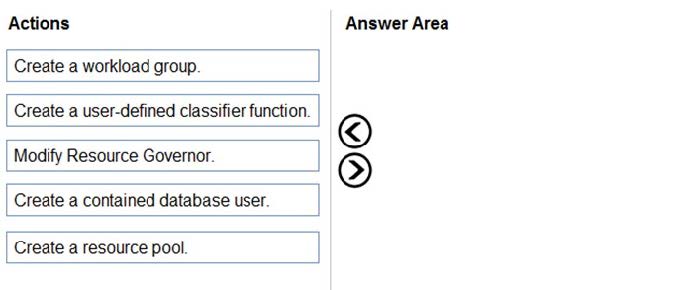

DRAG DROP

You have an Azure SQL managed instance named SQLMI1 that has Resource Governor enabled and is used by two apps named App1 and App2.

You need to configure SQLMI1 to limit the CPU and memory resources that can be allocated to App1.

Which four actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Question 3:

You have SQL Server on an Azure virtual machine.

You need to add a 4-TB volume that meets the following requirements:

1. Maximizes IOPS

2. Uses premium solid-state drives (SSDs)

What should you do?

A. Attach two mirrored 4-TB SSDs.

B. Attach a stripe set that contains four 1-TB SSDs.

C. Attach a RAID-5 array that contains five 1-TB SSDs.

D. Attach a single 4-TB SSD.

Correct Answer: B
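The reasoning behind option B can be sketched with simple arithmetic: a stripe set spreads I/O across its member disks, so its IOPS ceiling is roughly the sum of the per-disk limits. The per-disk figures below are assumptions based on the published limits for the 1-TiB (P30) and 4-TiB (P50) premium SSD sizes at the time of writing, so verify them against current Azure documentation. The `striped_iops` helper is hypothetical.

```python
# Assumed per-disk provisioned IOPS for Azure premium SSDs (verify against
# current Azure documentation; these values are illustrative assumptions).
PREMIUM_SSD_IOPS = {
    "P30_1TiB": 5_000,
    "P50_4TiB": 7_500,
}


def striped_iops(disk_sku: str, disk_count: int) -> int:
    """Aggregate IOPS ceiling for a simple stripe set (RAID 0).

    Striping spreads I/O across all member disks, so the ceiling is the sum
    of the per-disk limits. This ignores the VM-level IOPS cap, which can be
    lower than the disk total.
    """
    return PREMIUM_SSD_IOPS[disk_sku] * disk_count


single_disk = striped_iops("P50_4TiB", 1)   # option D: one 4-TB disk
stripe_set = striped_iops("P30_1TiB", 4)    # option B: four 1-TB disks striped

print(f"Single 4-TB disk: {single_disk} IOPS")
print(f"Four striped 1-TB disks: {stripe_set} IOPS")
```

Mirroring (option A) and RAID-5 (option C) spend capacity or write throughput on redundancy, which Azure managed disks already provide at the storage layer.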

Question 4:

Which audit log destination should you use to meet the monitoring requirements?

A. Azure Storage

B. Azure Event Hubs

C. Azure Log Analytics

Correct Answer: C

Explanation:

Scenario: Use a single dashboard to review security and audit data for all the PaaS databases.

Azure dashboards can bring together the operational data that is most important to IT across all your Azure resources, including telemetry from Azure Log Analytics.

Note: Auditing for Azure SQL Database and Azure Synapse Analytics tracks database events and writes them to an audit log in your Azure storage account, Log Analytics workspace, or Event Hubs.

Reference:

https://docs.microsoft.com/en-us/azure/azure-monitor/visualize/tutorial-logs-dashboards

Question 5:

You have an instance of SQL Server on Azure Virtual Machines named VM1.

You plan to schedule a SQL Server Agent job that will rebuild indexes of the databases hosted on VM1.

You need to configure the account that will be used by the agent. The solution must use the principle of least privilege.

Which operating system user right should you assign to the account?

A. Increase scheduling priority

B. Log on as a service

C. Profile system performance

D. Log on as a batch job

Correct Answer: D

Explanation:

To configure a SQL Server Agent job on an instance of SQL Server on Azure Virtual Machines (VMs) to rebuild indexes of the databases hosted on the VM, you need to assign the Log on as a batch job user right to the account that will be used by the agent.

Therefore, the correct answer is D. Log on as a batch job.

Question 6:

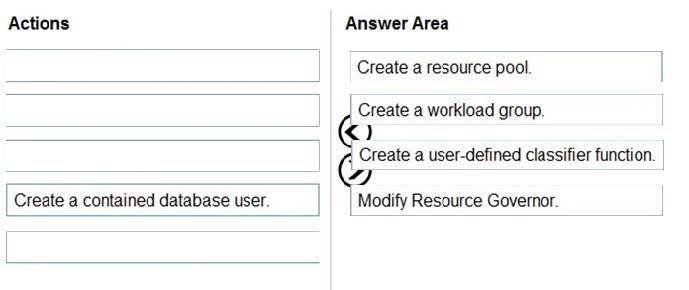

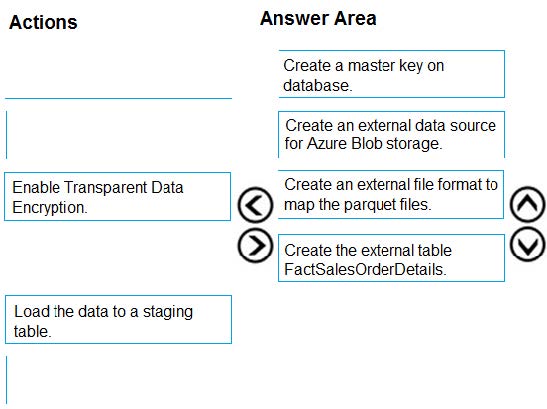

DRAG DROP

You are creating a managed data warehouse solution on Microsoft Azure.

You must use PolyBase to retrieve data from Azure Blob storage that resides in parquet format and load the data into a large table called FactSalesOrderDetails.

You need to configure Azure Synapse Analytics to receive the data.

Which four actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Explanation:

To query the data in your Hadoop data source, you must define an external table to use in Transact-SQL queries. The following steps describe how to configure the external table.

Step 1: Create a master key on the database. The master key is required to encrypt the credential secret. (Then create a database scoped credential for Azure Blob storage.)

Step 2: Create an external data source for Azure Blob storage with CREATE EXTERNAL DATA SOURCE.

Step 3: Create an external file format to map the Parquet files with CREATE EXTERNAL FILE FORMAT.

Step 4: Create the external table FactSalesOrderDetails, pointing to the data stored in Azure storage, with CREATE EXTERNAL TABLE.

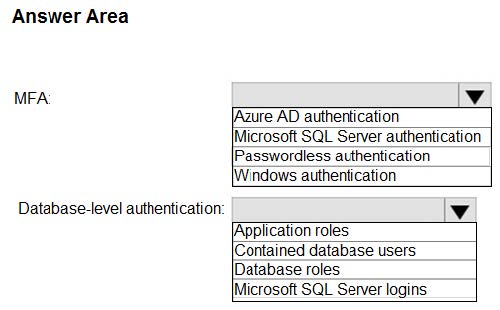

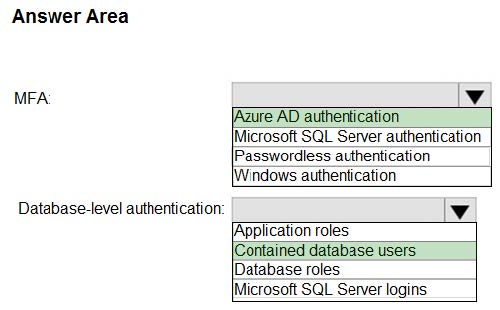

Question 7:

HOTSPOT

You have an Azure subscription that is linked to a hybrid Azure Active Directory (Azure AD) tenant. The subscription contains an Azure Synapse Analytics SQL pool named Pool1.

You need to recommend an authentication solution for Pool1. The solution must support multi-factor authentication (MFA) and database-level authentication.

Which authentication solution or solutions should you include in the recommendation? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Hot Area:

Correct Answer:

Explanation:

Box 1: Azure AD authentication

Azure Active Directory authentication supports Multi-Factor authentication through Active Directory Universal Authentication.

Box 2: Contained database users

Azure Active Directory uses contained database users to authenticate identities at the database level.

Incorrect:

SQL authentication: To connect to dedicated SQL pool (formerly SQL DW), you must provide the following information:

1. Fully qualified server name

2. Specify SQL authentication

3. Username

4. Password

5. Default database (optional)

Question 8:

You have two on-premises Microsoft SQL Server 2019 instances named SQL1 and SQL2.

You need to migrate the databases hosted on SQL1 to Azure. The solution must meet the following requirements:

1. The service that hosts the migrated databases must be able to communicate with SQL2 by using linked server connections.

2. Administrative effort must be minimized.

What should you use to host the databases?

A. a single Azure SQL database

B. an Azure SQL Database elastic pool

C. SQL Server on Azure Virtual Machines

D. Azure SQL Managed Instance

Correct Answer: D

Explanation:

Linked servers enable the SQL Server Database Engine and Azure SQL Managed Instance to read data from the remote data sources and execute commands against the remote database servers (for example, OLE DB data sources) outside of the instance of SQL Server.

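Linked servers are available in SQL Server Database Engine and Azure SQL Managed Instance. They are not available in Azure SQL Database single databases or elastic pools. SQL Server on Azure Virtual Machines also supports linked servers, but as an IaaS option it requires more administrative effort than Azure SQL Managed Instance.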

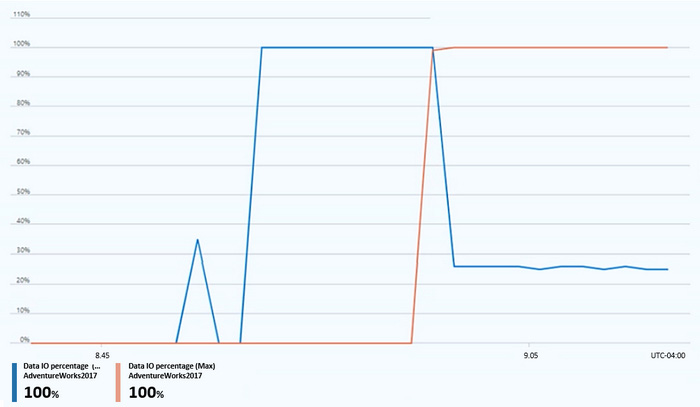

Question 9:

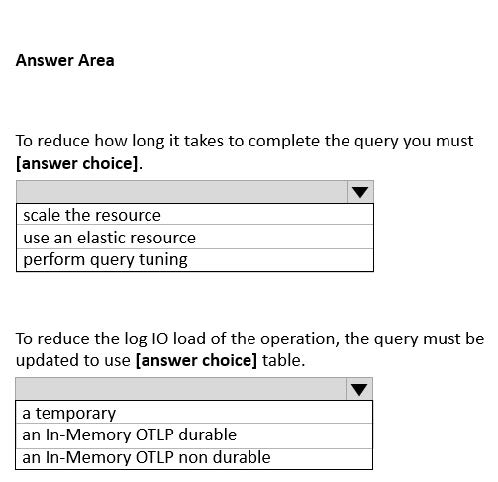

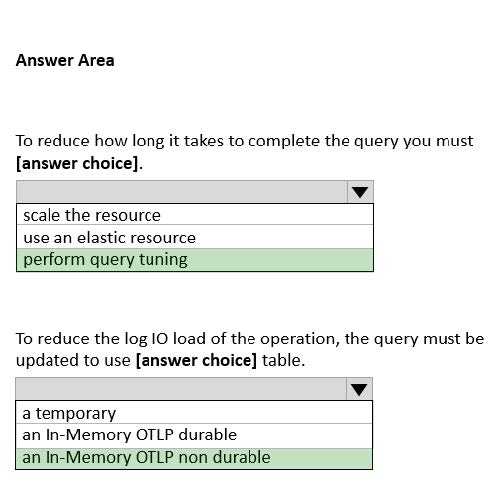

HOTSPOT

You have an Azure SQL database that contains a table named Table1.

You run a query to load data into Table1.

The performance of Table1 during the load operation is shown in the exhibit.

Hot Area:

Correct Answer:

Question 10:

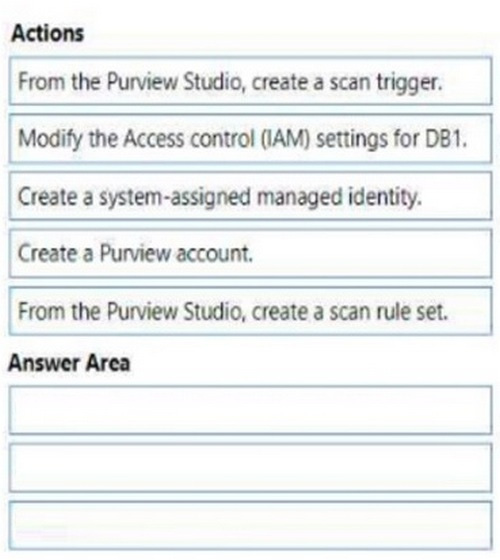

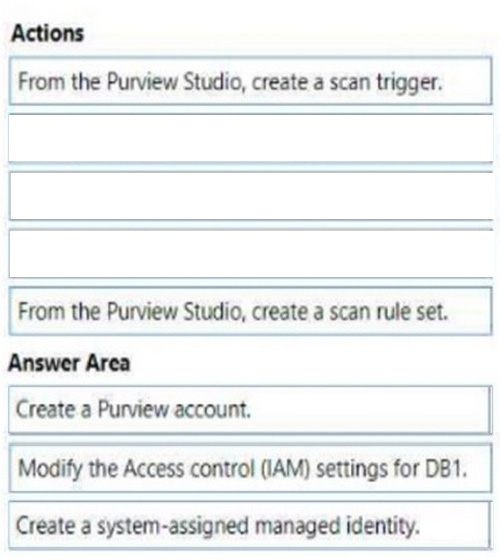

DRAG DROP

You have an Azure subscription that contains an Azure SQL database named DB1.

You plan to perform a classification scan of DB1 by using Azure Purview.

You need to ensure that you can register DB1.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Explanation:

Step 1: Create a Purview account.

Prerequisites

- An Azure account with an active subscription.

- An active Microsoft Purview account.

- Data Source Administrator and Data Reader permissions, which you need to register a source and manage it in the Microsoft Purview governance portal.

Step 2: Modify the Access control (IAM) settings for DB1.

A collection is a tool that the Microsoft Purview Data Map uses to group assets, sources, and other artifacts into a hierarchy for discoverability and to manage access control. All access to the Microsoft Purview governance portal’s resources is managed from collections in the Microsoft Purview Data Map.

Step 3: Create a system-assigned managed identity.

Authentication for a scan

To scan your data source, you’ll need to configure an authentication method in the Azure SQL Database.

The following options are supported:

- System-assigned managed identity (Recommended): This is an identity associated directly with your Microsoft Purview account that allows you to authenticate directly with other Azure resources without needing to manage a go-between user or credential set.

- Etc.

Reference: https://docs.microsoft.com/en-us/azure/purview/register-scan-azure-sql-database

Question 11:

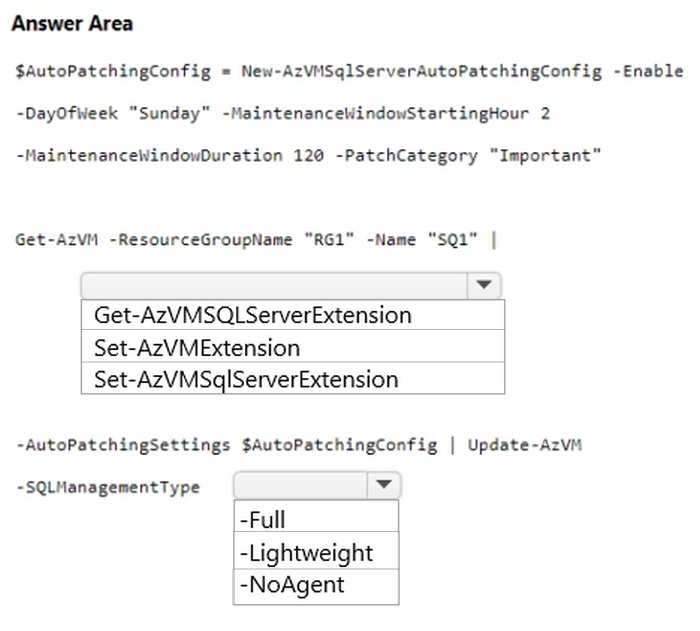

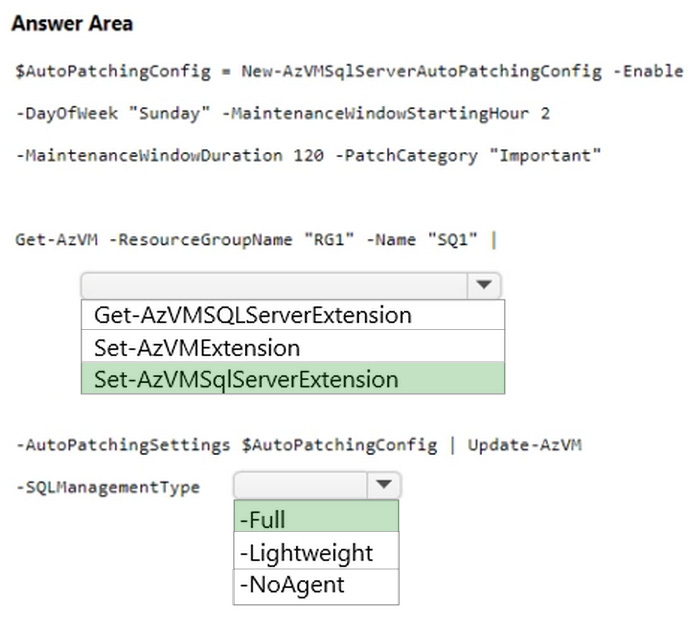

HOTSPOT

You have an Azure subscription that contains a resource group named RG1. RG1 contains an instance of SQL Server on Azure Virtual Machines named SQL

You need to use PowerShell to enable and configure automated patching for SQL The solution must include both SQL Server and Windows security updates.

How should you complete the command? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Hot Area:

Correct Answer:

Explanation:

Box 1: Set-AzVMSqlServerExtension

The Set-AzVMSqlServerExtension cmdlet sets the Azure SQL Server extension on a virtual machine.

Example: Set automatic patching settings on a virtual machine.

$AutoPatchingConfig = New-AzVMSqlServerAutoPatchingConfig -Enable -DayOfWeek "Thursday" -MaintenanceWindowStartingHour 11 -MaintenanceWindowDuration 120 -PatchCategory "Important"

Get-AzVM -ResourceGroupName "testrg" -Name "VirtualMachine11" | Set-AzVMSqlServerExtension -AutoPatchingSettings $AutoPatchingConfig | Update-AzVM

The first command creates a configuration object by using the New-AzVMSqlServerAutoPatchingConfig cmdlet and stores it in the $AutoPatchingConfig variable. The second command gets the virtual machine named VirtualMachine11 in the resource group testrg by using the Get-AzVM cmdlet and passes that object to Set-AzVMSqlServerExtension through the pipeline operator. Set-AzVMSqlServerExtension applies the automatic patching settings in $AutoPatchingConfig to the virtual machine, and the result is passed to the Update-AzVM cmdlet.

Box 2: -Full

The solution must include both SQL Server and Windows security updates.

Reference:

https://learn.microsoft.com/en-us/powershell/module/az.compute/set-azvmsqlserverextension

Question 12:

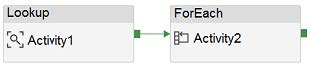

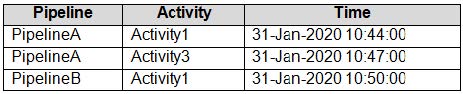

HOTSPOT

You have an Azure data factory that has two pipelines named PipelineA and PipelineB.

PipelineA has four activities as shown in the following exhibit.

PipelineB has two activities as shown in the following exhibit.

You create an alert for the data factory that uses Failed pipeline runs metrics for both pipelines and all failure types. The metric has the following settings:

1.

Operator: Greater than

2.

Aggregation type: Total

3.

Threshold value: 2

4.

Aggregation granularity (Period): 5 minutes

5.

Frequency of evaluation: Every 5 minutes

Data Factory monitoring records the failures shown in the following table.

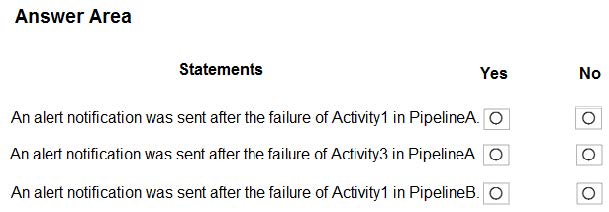

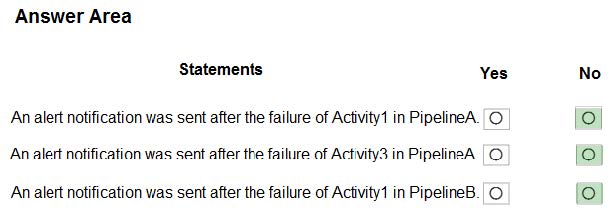

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Hot Area:

Correct Answer:

Explanation:

Box 1: No

Just one failure within the 5-minute interval.

Box 2: No

Just two failures within the 5-minute interval.

Box 3: No

Just two failures within the 5-minute interval.

Reference:

https://docs.microsoft.com/en-us/azure/azure-monitor/alerts/alerts-metric-overview
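The key detail is the operator: with "Greater than" and a threshold of 2, exactly two failures in a window do not fire the alert. The sketch below is a simplified model of that rule and not how Azure Monitor is implemented. It assumes fixed, clock-aligned 5-minute windows, the failure times are hypothetical (the exam exhibit is not reproduced here), and `window_start` and `fired_windows` are illustrative names.

```python
from collections import Counter
from datetime import datetime

WINDOW_MINUTES = 5   # aggregation granularity from the question
THRESHOLD = 2        # alert fires only when the total is GREATER THAN this


def window_start(ts: datetime) -> datetime:
    """Round a timestamp down to the start of its 5-minute window."""
    return ts.replace(minute=ts.minute - ts.minute % WINDOW_MINUTES,
                      second=0, microsecond=0)


def fired_windows(failures: list[datetime]) -> list[datetime]:
    """Return the windows whose total failure count exceeds the threshold."""
    totals = Counter(window_start(ts) for ts in failures)
    return sorted(w for w, total in totals.items() if total > THRESHOLD)


# Hypothetical failure times (not the values from the exam exhibit).
failures = [
    datetime(2025, 1, 1, 10, 1),   # window 10:00 -> 2 failures total
    datetime(2025, 1, 1, 10, 3),
    datetime(2025, 1, 1, 10, 6),   # window 10:05 -> 3 failures total
    datetime(2025, 1, 1, 10, 7),
    datetime(2025, 1, 1, 10, 9),
]

alerts = fired_windows(failures)
print(alerts)
```

In this hypothetical data only the 10:05 window fires, because it is the only one with more than two failures.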

Question 13:

You have an Azure data solution that contains an enterprise data warehouse in Azure Synapse Analytics named DW1.

Several users execute ad hoc queries against DW1 concurrently.

You regularly perform automated data loads to DW1.

You need to ensure that the automated data loads have enough memory available to complete quickly and successfully when the ad hoc queries run.

What should you do?

A. Assign a smaller resource class to the automated data load queries.

B. Create sampled statistics for every column in each table of DW1.

C. Assign a larger resource class to the automated data load queries.

D. Hash distribute the large fact tables in DW1 before performing the automated data loads.

Correct Answer: C

Explanation:

The performance capacity of a query is determined by the user’s resource class. Smaller resource classes reduce the maximum memory per query, but increase concurrency. Larger resource classes increase the maximum memory per query, but reduce concurrency.


Question 14:

Your on-premises network contains a Microsoft SQL Server 2016 server that hosts a database named db1.

You have an Azure subscription.

You plan to migrate db1 to an Azure SQL managed instance.

You need to create the SQL managed instance. The solution must minimize the disk latency of the instance.

Which service tier should you use?

A. Hyperscale

B. General Purpose

C. Premium

D. Business Critical

Correct Answer: D

Explanation:

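General Purpose uses remote storage, which adds disk latency. Business Critical keeps data and log files on local SSD storage, which gives the lowest latency. Premium is a DTU-based tier that is not available for Azure SQL Managed Instance, and Hyperscale is not offered for Managed Instance either.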

Question 15:

You have an Azure SQL database named db1 on a server named server1.

You need to modify the MAXDOP settings for db1.

What should you do?

A. Connect to db1 and run the sp_configure command.

B. Connect to the master database of server1 and run the sp_configure command.

C. Configure the extended properties of db1.

D. Modify the database scoped configuration of db1.

Correct Answer: D

Reference: https://docs.microsoft.com/en-us/azure/azure-sql/database/configure-max-degree-of-parallelism

…

Using Practice Questions the Right Way

Practice questions should help you:

- Identify knowledge gaps

- Improve time management

- Get familiar with exam wording

Avoid memorization. Focus on why an answer is correct.

DP-300 Study Guide 2025: Recommended Learning Path

A smart DP-300 study guide 2025 approach looks like this:

- Review the official skills outline

- Complete Microsoft Learn modules

- Practice in Azure

- Test yourself with practice questions

- Review weak areas and repeat

Consistency beats cramming every time.

Common DP-300 Exam Mistakes to Avoid

- Ignoring HA/DR topics

- Underestimating security questions

- Skipping hands-on labs

Time Management Tips for Exam Day

- Don’t rush the first questions

- Flag tough questions and return later

- Case studies are time-heavy—plan accordingly

- Leave 5–10 minutes for review
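To make these tips concrete, you can work out a rough pace before exam day. The sketch below uses the approximate figures quoted in this article (about 100 minutes, 40 to 60 questions, 5 to 10 minutes held back for review); the `minutes_per_question` helper is hypothetical, and your actual exam may differ.

```python
def minutes_per_question(total_minutes: float, questions: int,
                         review_minutes: float) -> float:
    """Average time available per question after reserving review time."""
    return (total_minutes - review_minutes) / questions


EXAM_MINUTES = 100  # approximate duration quoted in this article

for questions in (40, 60):
    for review in (5, 10):
        pace = minutes_per_question(EXAM_MINUTES, questions, review)
        print(f"{questions} questions, {review} min review: "
              f"{pace:.2f} min per question")
```

Under these assumptions the average works out to somewhere between 1.5 and about 2.4 minutes per question. Case studies will take more than the average, so aim to answer standalone questions faster than that.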

Career Benefits of Becoming a Microsoft Azure Database Administrator

With DP-300, you position yourself for:

- Higher-paying cloud roles

- Better job security

- Recognition as an Azure specialist

- Stronger credibility with employers

DP-300 vs Other Azure Certifications

DP-300 is more specialized than AZ-104 or AZ-305. If databases are your focus, DP-300 delivers deeper, job-ready validation.

Final Preparation Checklist Before Booking the Exam

- ✅ Reviewed official study guide

- ✅ Completed Microsoft Learn paths

- ✅ Practiced in Azure

- ✅ Attempted practice exams

- ✅ Understood exam format

Once ready, book through Pearson VUE.

Conclusion: Is DP-300 Worth It in 2025?

Absolutely. The latest updated DP-300 certification aligns perfectly with today’s Azure database workloads. With the exam updated in June 2025, preparing now means learning skills employers actually need. Focus on official Microsoft resources, back them up with hands-on practice, and use practice questions wisely. If you’re serious about becoming a Microsoft Azure database administrator, DP-300 is still one of the smartest moves you can make.

Frequently Asked Questions (FAQs)

1. What is the passing score for the DP-300 exam?

The passing score is 700 out of 1000.

2. When was the DP-300 exam last updated?

The English exam was updated on June 18, 2025.

3. How many questions are on the DP-300 exam?

Typically between 40 and 60 questions.

4. What is the best way to prepare for DP-300?

Start with Microsoft Learn, add hands-on Azure practice, then use practice questions for assessment.

5. Is DP-300 suitable for beginners?

It’s best suited for candidates with basic SQL and Azure experience.